Intelligence - artificial?

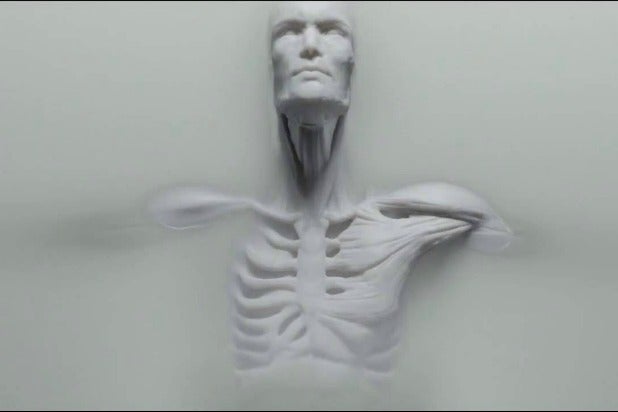

Perhaps it’s what happens when you binge-watch Westworld on a day when you’ve been trying to make sense of a world gone crazy, but I’ve been thinking about AI and the future of civilisation. That somewhat weighty combination led me to a single thought that I can’t shake – what if, in fact, it is our intelligence that is artificial?

For decades, those in the know have talked about the singularity – a hypothesis that artificial super-intelligence will lead to a runaway transformation in life as we know it through self-perpetuating and accelerating technological advances. The idea is usually traced back to John von Neumann; as Stanislaw Ulam recalled one of their conversations, it centred on “the ever accelerating progress of technology and changes in the mode of human life, which gives the appearance of approaching some essential singularity in the history of the race beyond which human affairs, as we know them, could not continue”.

The term has come to mean the point at which AI reaches, and then exceeds, human levels of intelligence – the point at which we are surpassed by the robots.

But what if we’re wrong – what if our insecurities about our supremacy on this planet are unfounded? What if, instead of AI surpassing us, the advances in our understanding of intelligence and cognition lead to the revelation that what we think of as human intelligence and free will are in fact emergent properties of a complex set of algorithms? That there is no artificial super-intelligence to fear, but that we are actually flawed and imperfect biological AI. What if the singularity is not that robots and androids are smarter than us, but that we are dumber than we thought?

It’s not entirely implausible – after all, psychologists have identified underlying heuristics and ‘rules’ that shape and govern our decision making. They may not be as simple as ‘if X, then do Y’, and they may not occur in isolation – but there are things that guide us.

Human affairs, as we know them, would certainly change – but what would the implications be? How would we handle such an almighty ego smash? What would that mean for the arc of history? Are we just pieces in a machine, with no real free will? Would personal responsibility cease to exist?